
Stop Answering Questions. Start Redesigning Them.

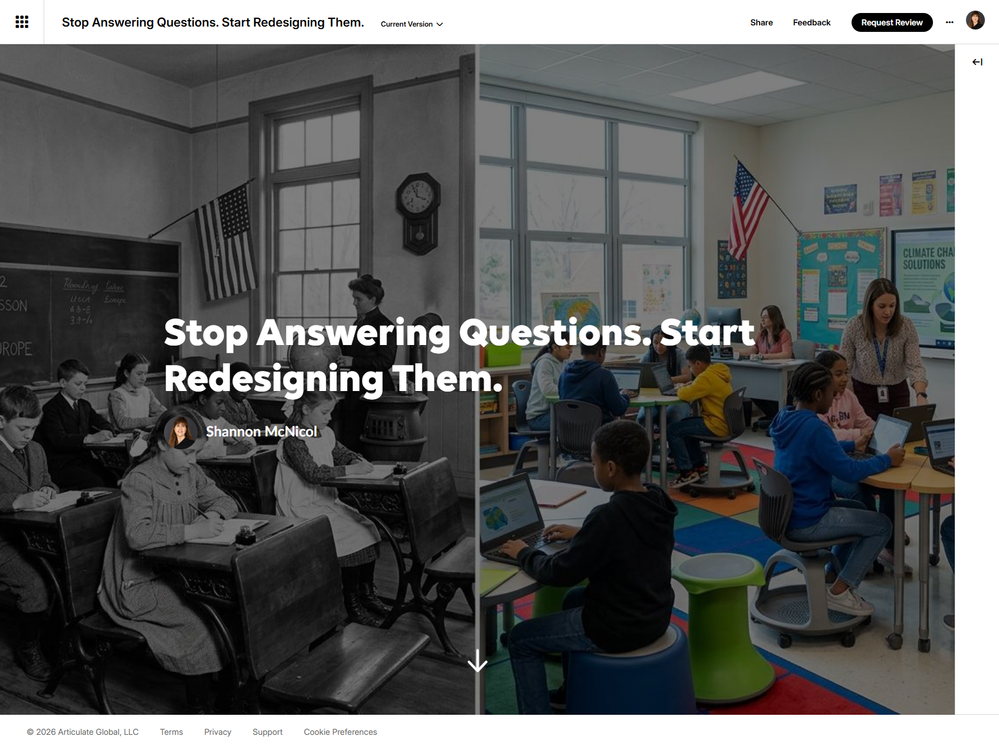

This week's E-Learning Heroes challenge dropped a fascinating artifact in our laps: the 1912 Bullitt County Schools eighth-grade final exam. And everyone's first instinct was the same: could I pass this thing?

And honestly? Maybe not. There's a grammar question asking students to write a single sentence containing four specific grammatical elements at once.

There's compound interest math done by hand. There's a civics question — "What is the proper basis of civil government?" — with no word limit, no rubric, and apparently no fear of what a fourteen-year-old might actually write.

The obvious eLearning move would be to modernize it into an assessment. Swap the open-ended questions for multiple choice. Add a scenario. Slap on some feedback. Done.

I went a different direction.

What if the learner was the designer?

Instead of asking people to answer the 1912 questions, I asked them to redesign them.

Pick a question. Tag the instructional design strategies you'd apply: scenario-based, cause-and-effect, perspective-taking, transfer task, and more, from twenty options in total.

Watch a live compatibility meter tell you whether your combination is a strong pairing or a bit of an overcrowded mess.

Then get feedback: a scorecard rating your approach on cognitive load, authenticity, and transfer potential, plus a specific strength, a push-it-further nudge, and one genuinely hard reflective question to sit with.

And at the end — a before/after reveal comparing the original 1912 question to an expert redesign, so you have something concrete to react to, agree with, or argue against.

The whole thing runs in a single HTML file embedded in Rise via a Code Block. No frameworks, no dependencies.
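If you're curious how a meter like this could work, here's a minimal TypeScript sketch. To be clear, this isn't the code from the actual build: the affinity numbers, the crowding penalty, the thresholds, and the names `AFFINITY` and `compatibility` are all assumptions invented for illustration, and only four of the twenty strategies appear. The idea is simply to average how well each pair of selected strategies fits together, then dock points once the selection gets crowded.

```typescript
// Hypothetical subset of the twenty strategy tags (these four are named in the post).
type Strategy =
  | "scenario-based"
  | "cause-and-effect"
  | "perspective-taking"
  | "transfer task";

// Assumed pairwise fit on a 0-100 scale. The numbers are invented for this sketch.
// Keys are the two strategy names in alphabetical order, joined with "|".
const AFFINITY: Record<string, number> = {
  "cause-and-effect|scenario-based": 90,
  "perspective-taking|scenario-based": 80,
  "scenario-based|transfer task": 70,
  "cause-and-effect|transfer task": 60,
  "cause-and-effect|perspective-taking": 50,
  "perspective-taking|transfer task": 40,
};

const DEFAULT_AFFINITY = 50; // assumed score for unlisted pairs or a single pick
const COMFORTABLE_COUNT = 3; // assumed number of strategies before crowding kicks in
const CROWDING_PENALTY = 20; // assumed points lost per strategy beyond that

function pairKey(a: Strategy, b: Strategy): string {
  return [a, b].sort().join("|");
}

function compatibility(selected: Strategy[]): { score: number; label: string } {
  const unique = Array.from(new Set(selected)); // ignore duplicate tags
  if (unique.length === 0) {
    return { score: 0, label: "Pick a strategy" };
  }

  // Sum the affinity of every distinct pair in the selection.
  let total = 0;
  let pairs = 0;
  unique.forEach((a, i) => {
    unique.slice(i + 1).forEach((b) => {
      total += AFFINITY[pairKey(a, b)] ?? DEFAULT_AFFINITY;
      pairs += 1;
    });
  });

  const base = pairs === 0 ? DEFAULT_AFFINITY : Math.round(total / pairs);
  const extra = Math.max(0, unique.length - COMFORTABLE_COUNT);
  const score = Math.max(0, base - extra * CROWDING_PENALTY);

  const label =
    score >= 75 ? "Strong pairing" : score >= 50 ? "Workable" : "Overcrowded";
  return { score, label };
}
```

With these made-up numbers, pairing scenario-based with cause-and-effect scores 90 ("Strong pairing"), while selecting all four drops to 45 ("Overcrowded"). A live meter would just call `compatibility` again every time a tag is toggled.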

Why this framing works

Most eLearning about instructional design is passive. You read about Bloom's taxonomy. You watch someone explain cognitive load theory. You take a quiz about it afterward.

This puts you in the seat. You make a call — I'm going to apply scenario-based learning and cause-and-effect framing to this arithmetic problem — and then you get a mirror held up to that decision. The compatibility meter alone has a way of making you pause and ask whether you're building a coherent design rationale or just stacking strategies because they sound good.

The 1912 exam is the perfect raw material for this because the questions are so far from modern practice that every design decision feels deliberate. You can't default to habit. You have to think.

Give it a try below and let me know — which question did you pick, and did the compatibility meter surprise you?

Built with Articulate Rise 360 and Claude by Anthropic.